MDS Newsletter #41

Hey Folks,

Hope you guys had a great start to the week! Thanks for all the love you showed to our new ' Amazing People in Data' series, we have super interesting interviews lined up for you, till then stay tuned and enjoy this week's newsletter!😀

Amazing People in Data

Meet Dunith: a developer advocate at Startree data. In this interview, learn about some of the awesome things he’s working on Startree data, unbundling of the streaming stack, his advice to the budding writers, and his data journey so far!

Read the full story here.

Featured tools of the week

- Cascade is a no-code data transformation and automation toolkit built for the cloud, allowing ops teams and analysts to transform data and trigger without any code.

Cascade has raised a total of $5.3M in funding over 1 round. This was a Seed round raised on Nov 9, 2021. - Octolis is a SaaS to create a unified customer database, and synchronize it with your favorite tools. Octolis seeks to reconcile the benefits of tailor-made customer databases with those of off-the-shelf CDP / CRM solutions. Octolis works seamlessly on top of a database that belongs to our clients while giving full autonomy to the business teams.

Featured data stack of the week

Capdesk is a rapidly growing equity management platform that enables high-growth companies across Europe to keep track of their cap tables as they scale, with greater accuracy and efficiency. See how they have organised their data stack.

Good reads and resources

- Data & Analytics Regression Playbook: Make Your Data Work Harder…And Smarter!: With a potential recession lurking on the horizon, most companies will hunker down and resort to budget cuts. But if you are a data-first organization, you will see this as a business opportunity to leverage your data to “do more with less”. Most companies just do not know what to do with the wealth of data they have acquired. Here’s a Data and Analytics Recession Playbook playbook by Bill Schmarzo, of actions you can take today to make your data work harder, and smarter, in the mission to “do more with less.”

- Data Unicorns Are Rare, So Hire These 3 People Instead: An effective data architecture is the result of great collaboration between people who choose to specialize in one area of expertise. The question is whom should you hire first and why. Marie Lefevre wrote an article describing 3 main sets of skills that must be taken over by distinct individuals in a company; Data Engineers, Data Analysts, and Data Managers for building data architecture. She made an amazing analogy between the installation of plumbing facilities at a house and building a data architecture.

Journal

- How to create a Data Catalog, A step-by-step guide: Data is becoming more decentralized through concepts like the data mesh. As more teams outside of the data function start to use data in their day-to-day, different tables, dashboards, and definitions are being created at an almost exponential rate. Data catalogs are important because they help you organize your data whether you are working with structured or unstructured data. In this article, Etai Mizrahi describes 5 steps that teams need to take when creating a data catalog. According to him having a tool that can track, document, store, and provide metadata insights for systems beyond just your current data stack will help you identify your blind spots in your current architecture.

Community Speaks

This week's question: What are the major data management challenges hyper-scale companies face when using various combinations of cloud and on-premise data platforms?

You can answer it here.

Last week's question: Do you think the cost of a cloud data warehouse is a problem?

You will need to manage the usage very carefully in order to keep cost in check. Specifically for Snowflake, here are some notes that might help you. - Compute costs will be more than 90% of your total bill when you just get started. Storage is very cheap ( $40 -$46 per TB per month) - It is very easy for the compute bill to exponentially rise if you scale up the processors (virtual warehouses in snowflake) ( each increment in scaling up is at least double from current size. for example - XS to S size will be 2x) ( you can limit user's ability to scale up, you could limit user's ability to create new virtual warehouses, you can keep a monitor on the usage and automatically stop if a threshold reaches) - If you have data in the range of few 10s of GBs - XS size of the warehouse should suffice for processing - and even if you consider 5-8 hours of on-time each day - you're looking at approx. $450 (compute and storage combined)

- Bhavik Vadhia

Upcoming data summits and events

- Dremio is organizing the Data at the yard event on July 12th at 5:30 PM at Cedric's at the shed, New York.

Immerse yourself with Data Experts by joining us for an evening to Network, Socialize and Connect after the AWS NY Summit.

Register here.

MDS Jobs

- Secret Escapes is hiring an ‘Analytics Engineer’

Location - Remote, UK, Germany

Data Stack- Snowflake, Airflow, Tableau

Apply here - Betterment is hiring a ‘Data Engineer III’

Location- US

Data Stack- dbt, Snowflake, Higtouch, Segment

Apply here - Wind River is hiring a ‘Data Insight Architect’

Location- USA

Data Stack- Fivetran, Snowflake, dbt, Looker

Apply here

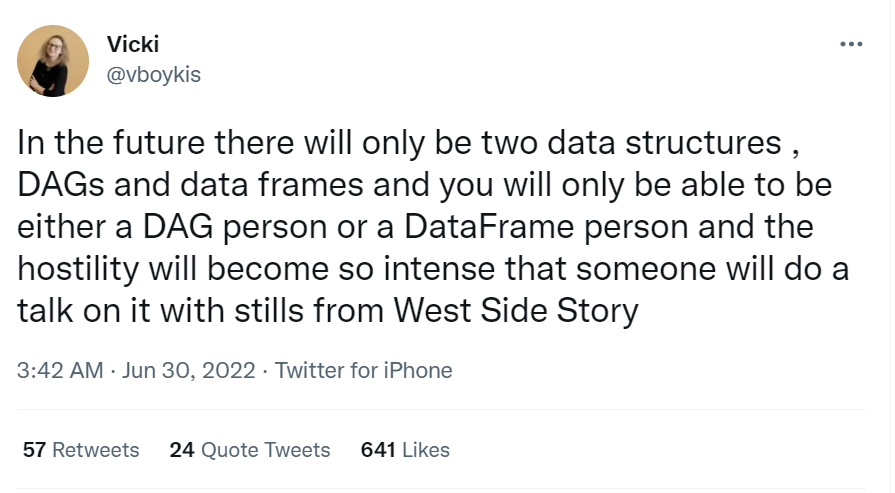

🔥 on Twitter

Just for fun😀

If you're enjoying this newsletter series (we know you do😉) then add this to your address book so you don't miss out on any data updates! 😄🧡

If you have any suggestions, want us to feature an article, or list a data engineering job, hit us up! We would love to include it in our next edition😎

About Moderndatastack.xyz - We're building a platform to bring together people in the data community to learn everything about building and operating a Modern Data Stack. It's pretty cool - do check it out :)