MDS Newsletter #66

🎄 As we celebrate the holidays and look back on the year, we wanted to take a moment to wish all of our readers a Merry Christmas 🎄

In the last edition of this year's newsletter, we've got the inside scoop on innovative tools and data stacks in the industry, along with articles and resources on the latest trends in data and artificial intelligence, upcoming events, and job openings. Don't miss this chance to stay ahead of the curve. Read on to see what we have in store for you! 🎁

Featured tools of the week

- Equalum: Equalum streams data to the cloud in real time, powering real-time analytics, operations, data warehouse modernization, and more. It delivers critical business data across organizations with greater speed, reliability, and accuracy.

Equalum has raised a total of $39M in funding over 5 rounds. Its latest round was a Series C, raised on Aug 8, 2022.

- Mode: Mode is a modern business intelligence platform that unites data teams with business teams to build analytics that drive business outcomes.

Mode Analytics has raised a total of $81M in funding over 7 rounds. Its latest round was a Series D, raised on Aug 6, 2020.

Featured data stack of the week

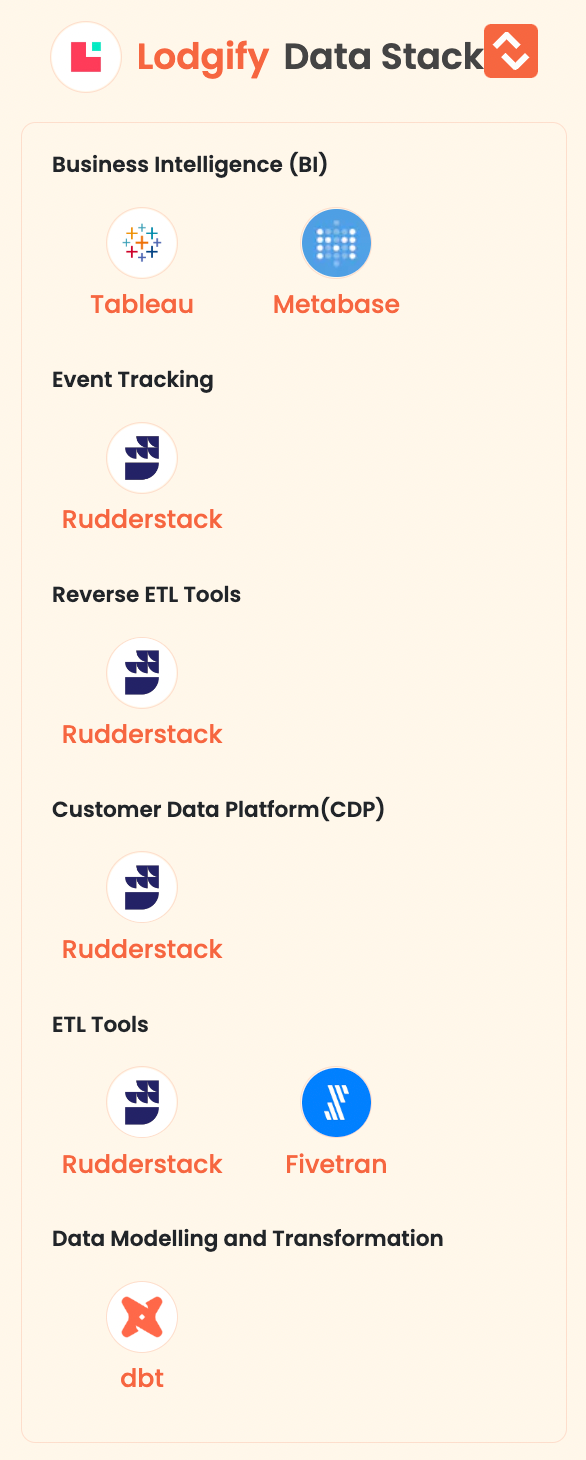

Lodgify: Lodgify is a SaaS startup for the hospitality industry focused on building vacation rental software that enables property owners and managers to independently manage and market their business online.

Here are the data tools they are using

Good reads and resources

- McDonald’s event-driven architecture: The data journey and how it works: This article discusses the implementation and operation of event-driven architecture at McDonald's Global Technology division. It covers the process of producing and consuming events through the use of producer and consumer software development kits (SDKs), schema validation to ensure the integrity of the data, and the use of a schema registry and dead-letter topic to rectify any errors or issues. The article also covers using an event gateway for events produced by external partners and implementing cluster autoscaling and domain-based sharding to support efficient scaling and minimize failures. Finally, the article discusses the potential for future expansion and development of the platform.

By Vamshi Krishna Komuravalli and Damian Sullivan

- How I Engineered my First Data Pipeline using only Open Source Software: This article walks through building a data pipeline for an eCommerce business using open-source software. The author initially considered using Airflow to extract data from MariaDB databases, transform it, and load it into a Postgres data warehouse, but ran into encoding issues and the need to manually define tables in the warehouse. They ultimately used Airflow to extract and transform data from the MariaDB databases and save it as CSV files, with a separate process loading those CSV files into the Postgres data warehouse. The author also discusses whether to sync the source tables or a final view, and the trade-offs between incremental and full refreshes of the data warehouse.

By DL
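The McDonald's article above describes validating events against a schema before they are produced and routing failures to a dead-letter topic so they can be fixed. As a rough illustration only, here is a minimal Python sketch of that pattern, assuming an in-memory stand-in for the message broker and a hard-coded schema in place of a schema registry. `ORDER_PLACED_SCHEMA`, `InMemoryBroker`, `produce`, and the topic names are all hypothetical; this is not McDonald's SDK or any real Kafka client API.

```python
from dataclasses import dataclass, field
from typing import Any

# Hypothetical schema: field name -> expected Python type.
# A real setup would look this up in a schema registry instead.
ORDER_PLACED_SCHEMA: dict[str, type] = {
    "order_id": str,
    "store_id": int,
    "total": float,
}


@dataclass
class InMemoryBroker:
    """Stand-in for a message broker: maps topic name -> list of events."""
    topics: dict[str, list[dict[str, Any]]] = field(default_factory=dict)

    def send(self, topic: str, event: dict[str, Any]) -> None:
        self.topics.setdefault(topic, []).append(event)


def validate(event: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Return validation errors; an empty list means the event conforms."""
    errors: list[str] = []
    for name, expected in schema.items():
        if name not in event:
            errors.append(f"missing field: {name}")
        elif not isinstance(event[name], expected):
            got = type(event[name]).__name__
            errors.append(f"{name}: expected {expected.__name__}, got {got}")
    return errors


def produce(broker: InMemoryBroker, topic: str, event: dict[str, Any],
            schema: dict[str, type], dead_letter_topic: str) -> bool:
    """Send a valid event to `topic`; otherwise park it, with its errors,
    on the dead-letter topic and return False."""
    errors = validate(event, schema)
    if errors:
        broker.send(dead_letter_topic, {"event": event, "errors": errors})
        return False
    broker.send(topic, event)
    return True


broker = InMemoryBroker()
produce(broker, "orders", {"order_id": "A1", "store_id": 42, "total": 9.99},
        ORDER_PLACED_SCHEMA, "orders.dead-letter")
produce(broker, "orders", {"order_id": "A2", "total": "oops"},
        ORDER_PLACED_SCHEMA, "orders.dead-letter")
print(broker.topics["orders.dead-letter"][0]["errors"])
# ['missing field: store_id', 'total: expected float, got str']
```

Keeping the rejected event together with its errors on the dead-letter topic means it can be inspected, corrected, and replayed later instead of being silently dropped.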
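The pipeline article above weighs incremental against full refreshes when staging extracts as CSV files. A minimal Python sketch of that extract-to-CSV-then-load flow follows, assuming the standard-library `sqlite3` module as a stand-in for both the MariaDB source and the Postgres warehouse, a hypothetical `customers` table whose `updated_at` column serves as the watermark, and CSV text held in memory rather than written to disk. The helpers `extract_to_csv` and `load_csv` are illustrative names, not taken from the article, and the Airflow orchestration the author used is left out.

```python
import csv
import io
import sqlite3
from typing import Optional

COLUMNS = ["id", "name", "updated_at"]


def extract_to_csv(src: sqlite3.Connection, since: Optional[str]) -> str:
    """Dump `customers` to CSV text: all rows if `since` is None (full refresh),
    otherwise only rows updated after the watermark (incremental)."""
    query = "SELECT id, name, updated_at FROM customers"
    params: tuple = ()
    if since is not None:
        query += " WHERE updated_at > ?"
        params = (since,)
    rows = src.execute(query + " ORDER BY id", params).fetchall()
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(COLUMNS)
    writer.writerows(rows)
    return buf.getvalue()


def load_csv(dst: sqlite3.Connection, csv_text: str, full_refresh: bool) -> None:
    """Load CSV text into the warehouse table; a full refresh empties it first."""
    if full_refresh:
        dst.execute("DELETE FROM customers")
    for row in csv.DictReader(io.StringIO(csv_text)):
        # CSV carries no types, so the integer key is cast back explicitly.
        dst.execute(
            "INSERT OR REPLACE INTO customers (id, name, updated_at) VALUES (?, ?, ?)",
            (int(row["id"]), row["name"], row["updated_at"]),
        )
    dst.commit()


DDL = "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)"
src = sqlite3.connect(":memory:")  # stands in for a MariaDB source
dst = sqlite3.connect(":memory:")  # stands in for the Postgres warehouse
src.execute(DDL)
dst.execute(DDL)  # the warehouse table is defined by hand
src.executemany("INSERT INTO customers VALUES (?, ?, ?)",
                [(1, "Ana", "2022-12-01"), (2, "Ben", "2022-12-10")])

# First run: full refresh.
load_csv(dst, extract_to_csv(src, since=None), full_refresh=True)

# The source changes: one update and one new row.
src.execute("UPDATE customers SET name = 'Benjamin', updated_at = '2022-12-20' WHERE id = 2")
src.execute("INSERT INTO customers VALUES (3, 'Cleo', '2022-12-21')")

# Later runs: incremental, using the newest updated_at already loaded as the watermark.
watermark = dst.execute("SELECT MAX(updated_at) FROM customers").fetchone()[0]
incremental_csv = extract_to_csv(src, since=watermark)
load_csv(dst, incremental_csv, full_refresh=False)
print(dst.execute("SELECT * FROM customers ORDER BY id").fetchall())
# [(1, 'Ana', '2022-12-01'), (2, 'Benjamin', '2022-12-20'), (3, 'Cleo', '2022-12-21')]
```

The incremental path moves only changed rows, but an `updated_at` watermark never sees rows deleted at the source, which a periodic full refresh would clean up. `INSERT OR REPLACE` is SQLite syntax; on Postgres the equivalent upsert is `INSERT ... ON CONFLICT (id) DO UPDATE`.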

Upcoming data events, webinars, and summits

- Join the meet-up on Tuesday, 24 January at 6:00 pm at Mesh-AI HQ in Liverpool Street to get started with some of the hottest data trends for 2023. There will be two talks.

Talk 1: Andrew Jones from GoCardless will be giving a talk on data contracts.

Talk 2: Steve Goodman from Tide will be giving a talk on machine learning (ML) explainability.

Register for the event here

- Join the Data and AI Summit on February 2, 2023, from 9:00 am to 4:00 pm. This virtual event will feature more than 20 expert speakers from various organizations across Europe, sharing their insights on the latest developments in data and artificial intelligence within the enterprise.

Some of the topics that will be covered include Data Mesh, the role of a data-driven culture and organization, and how to utilize data and AI to deliver predictive solutions.

Register for the event here

MDS Jobs

- DataSF (City & County of San Francisco) is hiring an 'Analytics Engineer - Housing'

Location: San Francisco, CA (1 day in office, must be based in CA)

Stack: Snowflake, dbt

Apply here

- Top Hat is hiring an 'Analytics Engineer'

Location: Remote

Stack: Redshift, Airflow, dbt, Looker

Apply here

- Bondora is hiring an 'Analytics Engineer'

Location: Estonia/Europe

Stack: Databricks, dbt Core, Azure DevOps, Looker

Apply here

🔥 on Twitter

Feature engineering is the most important part of building great models for tabular data.

I revisited dozens of tabular ML projects I worked on in the past and distilled the techniques I used down to repeatable, powerful processes.

Here's what I found:

Mark Tenenholtz (@marktenenholtz), December 22, 2022

Just for fun 😀

It's not always Old Man Jenkins... (from r/dataengineering)

Subscribe to our newsletter and follow us on Twitter and LinkedIn so you never miss a data update again.

What do you think about our weekly Newsletter?

Love it | It's great | Good | Okay-ish | Meh

If you have any suggestions, want us to feature an article, or want to list a data engineering job, hit us up! We would love to include it in our next edition 😎

About Moderndatastack.xyz - We're building a platform to bring together people in the data community to learn everything about building and operating a Modern Data Stack. It's pretty cool - do check it out :)