MDS Newsletter #60

In this week's newsletter, read about implementing a simple data lakehouse system using open-source software, whether it is time to abandon the quest for a single source of data truth, and how Airbyte is commoditizing data integration.

The Modern Data Show

S01 E10: Commoditizing data integration with Airbyte, Michel Tricot, Co-founder and CEO, Airbyte: When Michel and his team founded Airbyte in 2020, there were already plenty of data integration tools on the market and the space was fairly mature. So what led them to start the company, and what unique problem did they aim to address? To answer this, this week's episode features Michel Tricot, the co-founder and CEO of Airbyte. Listen Now👇

All episodes are available on Spotify, Apple Podcasts, Google Podcasts and Amazon Music.

Featured tools of the week

- Dagster is a cloud-native orchestrator for the whole development lifecycle, with integrated lineage and observability, a declarative programming model, and best-in-class testability.

Dagster (Elementl) has raised a total of $15.8M in funding over 2 rounds. Their latest funding was raised on Nov 16, 2021 from a Series A round.

- Coefficient is a no-code solution that allows business teams to work with real-time data directly from their spreadsheets. You can sync your Google Sheets to your company systems such as Salesforce, Hubspot, Google Analytics, Looker, MySQL, Redshift, Slack and more.

Coefficient has raised a total of $24.7M in funding over 3 rounds. Their latest funding was raised on Nov 10, 2022 from a Series A round.

Featured stack of the week

Instamojo is India's simplest online selling platform. It powers more than 20 lakh (2 million) small, independent businesses, MSMEs, startups, and DTC brands with online stores, landing pages and online payment solutions to help them run their eCommerce businesses successfully.

Good reads and resources

- Build an Open Data Lakehouse with Spark, Delta and Trino on S3: Data lakes are often the first place collected data lands in a data system, and they help in gaining insights from ever-growing data. But with data lakes, organisations face challenges with data quality and governance. Data warehouses, on the other hand, are often the final destination of analytical data, but they have very limited support for unstructured data, and SQL over ODBC/JDBC is not efficient for ML. Enter the data lakehouse. A data lakehouse system tries to solve these challenges by combining the strengths of data lakes and warehouses.

In this article, Yifeng Jiang explains the process of implementing a simple data lakehouse system using open-source software. This implementation can run with cloud data lakes like Amazon S3, or on-premises ones such as Pure Storage FlashBlade S3.

- Does data need to come from a single source of truth? With the ever-growing volume of data that businesses are generating, it seems impossible to have a single source of truth, considering the size and diversity of that data. For a long time, the overall objective of any data transformation has been to get all of an organisation’s data into one data warehouse that can provide a single source of truth. It’s very hard to figure out what data to centralise, analyse, and use if you don’t know how to find it, and as a result, many organisations are still reliant on fragmented data landscapes that are in a constant state of flux. Is it time to abandon the quest for a single source of data truth and, if so, what can organisations do to ensure trustworthy data from multiple different sources? Article by Brian McKenna.

Upcoming data events, webinars and summits

- The Operational Analytics Club is organising a workshop, "Data modeling: How to design a lasting business blueprint", with Ergest Xheblati.

In this 90-minute workshop, Ergest will show how to conceptually model a fictional SaaS business and demonstrate a physical data model for each of these approaches:

- One Big Table (OBT)

- Star schema (facts and dimensions)

- Data Vault / Anchor Model

- Activity Schema

Register here.

Data startup funding news of the week

- MotherDuck secures investment from Andreessen Horowitz to commercialize DuckDB: MotherDuck today announced that it raised $47.5 million across seed and Series A rounds, valuing the company at $175 million post-money. Redpoint led the seed while Andreessen Horowitz (a16z) led the Series A — other investors include Madrona, Amplify Partners and Altimeter.

MDS Jobs

- Mack Weldon is hiring a 'Senior Analytics Engineer'

Location: NYC (hybrid)

Stack: Snowflake, dbt, Looker

Apply here

- Coinlist is hiring a 'Senior Data Engineer'

Location: Remote / SF / NYC

Stack: dbt, Prefect, AWS

Apply here

- ACLU is hiring a 'Director of Analytics Engineering'

Location: United States

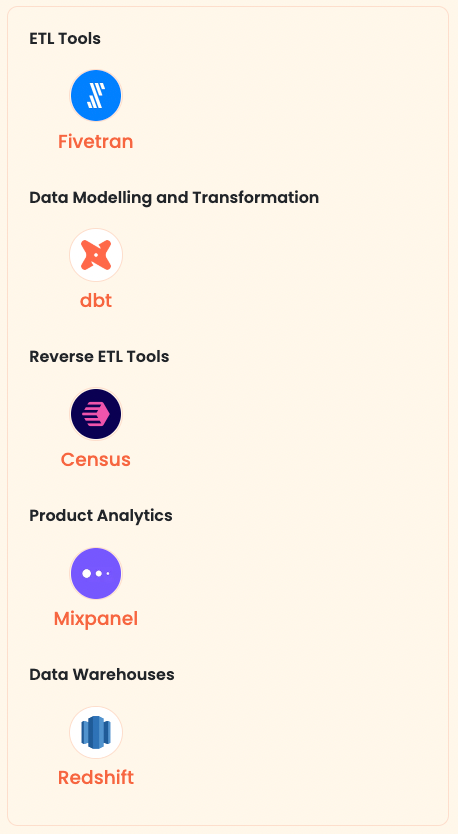

Stack: Fivetran, Redshift, dbt

Apply here

🔥 On Twitter

Just for fun 😃

Subscribe to our newsletter, follow us on Twitter and LinkedIn, and never miss data updates again.

If you have any suggestions, want us to feature an article, or list a data engineering job, hit us up! We would love to include it in our next edition😎

About Moderndatastack.xyz - We're building a platform to bring together people in the data community to learn everything about building and operating a Modern Data Stack. It's pretty cool - do check it out :)